Regulatory teams do not need another AI chat window. They need one workspace that can retrieve the source, draft against it, preserve the citation chain, and carry that chain forward into review. That is what agentic should mean in regulated work: not a model acting on its own, but a system that can move work from question to draft to monitoring without breaking traceability.

That distinction matters now because the work itself is getting more connected. FDA's QMSR took effect on February 2, 2026. ICH E6(R3) was finalised in January 2025 and adopted by FDA in September 2025. The EU AI Act starts applying to high-risk AI systems from August 2, 2026. EUDAMED obligations are landing module by module under MDR implementation timelines. None of those changes sit in isolation. The answer, the draft, the monitoring signal, and the review note all touch the same evidence chain.

Regulatory work still breaks across too many tools

Most teams still run the workflow in pieces.

They ask the question in one place. They read the source somewhere else. They draft in Word. They copy the citation by hand. They reopen the original document when a reviewer asks where the sentence came from. Then they repeat the same process for the next jurisdiction, the next guidance update, or the next technical file section.

That fragmentation is expensive in exactly the wrong way. It does not only cost time. It weakens the audit trail. If the answer lives in one tool, the draft in another, and the source link in a browser tab or spreadsheet, the person doing the work has to manually preserve the connection between them.

For regulated work, that connection is the work.

The point is not to get text faster. The point is to keep every claim tied to the article, annex, clause, guidance paragraph, or agency publication that supports it. A regulatory AI system that cannot preserve that chain end to end is still leaving the hard part to the human.

What agentic should mean in regulatory work

"Agentic" is already becoming a vague word. In regulatory work, it needs a narrower meaning.

It should not mean an autonomous system making uncontrolled decisions. It should mean a system that can complete a bounded chain of tasks while keeping the source record intact. Ask a question. Retrieve the relevant passages. Draft the answer. Carry those citations into the next draft. Monitor for changes that affect the same topic. Compare one jurisdiction against another in a reusable structure. Keep the provenance visible at every step.

That is different from a generic assistant that answers one prompt at a time.

A prompt-response tool can be useful. It can summarise a document or suggest wording. But if every next step starts from zero, the user still has to rebuild the workflow manually. In practice, that means:

- re-checking the source after every answer

- re-inserting citations during drafting

- re-reading updates when a guidance changes

- redoing the comparison work every time a new question comes up

An agentic workspace should reduce that repetition. It should keep the work object, the source context, and the citation trail together.

One question should produce a usable answer, not a chat reply

The first test is simple: can the system answer a real regulatory question with citations a reviewer can verify?

If the answer is only a paragraph of plausible text, the user still has to do the real work after the answer appears. They still need to reopen the regulation, confirm the article number, verify the interpretation, and decide whether the output is safe to reuse. That is not a completed task. It is just a faster first draft.

The threshold for regulated work is higher. The answer should already carry the source chain with it. The reviewer should be able to inspect what passage was retrieved, what claim it supports, and whether the interpretation is defensible.

That is why cited retrieval is the minimum bar, not an optional feature. A model that generates persuasive text without a verifiable source chain is still asking the RA professional to shoulder the risk.

Try this in RegAid: What does MDR Article 61 require for clinical evaluation?

Drafting has to preserve the evidence, not restart it

The second test is what happens after the answer.

Most regulatory work is not finished when the question is answered. The answer has to become something: a technical documentation section, an internal recommendation, a change assessment note, a submission paragraph, a CER update, or a response to a reviewer comment.

That is where most AI systems fall apart. They answer in one surface and draft in another. The source proof gets thinner as the work moves forward.

In a real regulatory workflow, drafting needs to inherit the evidence, not re-create it. If the system has already retrieved the relevant span, the next draft should carry that span forward. The writer should be able to inspect the underlying source without leaving the drafting flow.

This matters for routine work and high-risk work alike. A PMS statement, a CER section, a classification rationale, or a cross-jurisdiction comparison all become easier to defend when the drafting surface stays connected to the supporting source.

That is also the cleaner answer to the usual AI governance question. The issue is not whether the system produced words. The issue is whether the system preserved the basis for those words in a way a reviewer can inspect.

Monitoring should feed the same workspace

The third test is what happens when the source changes.

Regulatory monitoring is often still treated as a separate product category. One tool watches guidance. Another tool helps write. A third stores the draft. But that split does not match the workflow. Monitoring only matters because it changes drafting, review, filing, and internal decisions downstream.

If a new MDCG document lands, or FDA issues a final guidance, or an AI Act implementation provision becomes concrete, the question is not simply "was there an update?" The question is "what part of our current work does this change?"

That means the monitoring surface should not stop at the alert. It should connect to the answer history, the ongoing draft, and the project that is affected. The signal should arrive with the source attached, and it should be usable immediately inside the same workspace where the team is already writing and reviewing.

This is where a lot of "regulatory intelligence" tooling still feels one generation behind. It gets the update into the inbox but leaves the downstream interpretation and drafting workflow fragmented.

Comparison work is where the time really goes

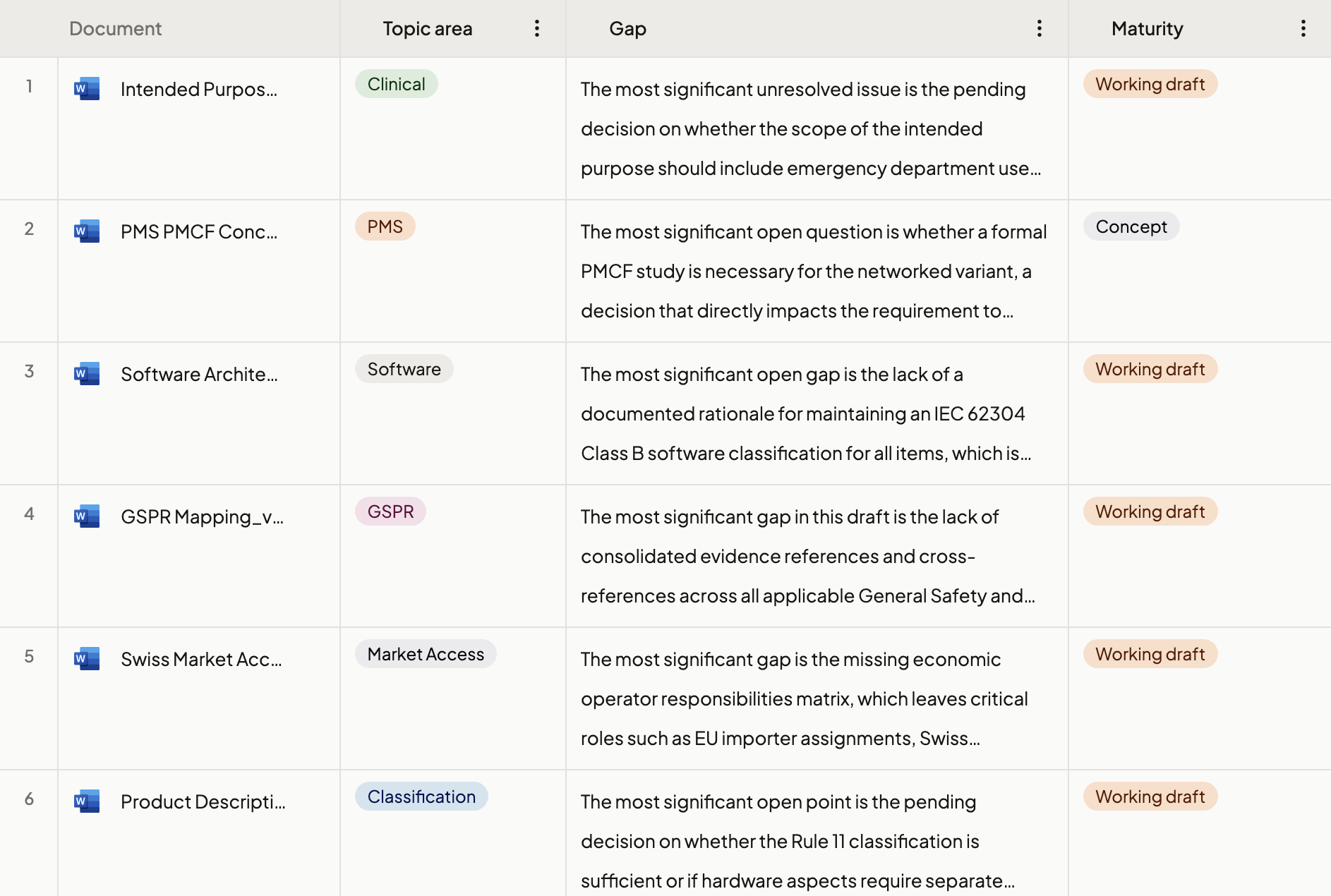

The fourth test is comparison.

This is the work that burns senior time: FDA versus EMA, MDR versus QMSR, old guidance versus final guidance, one tech file versus another, one CER section against a new expectation. The answer is rarely in one paragraph. It lives across rows, columns, and recurring review questions.

An agentic workspace should be able to handle that structure directly. It should not force the user to export the work into a spreadsheet the moment the task stops being linear. If the system can retrieve, compare, and preserve evidence inside a reusable grid, the team can ask new questions on top of the same evidence base instead of rebuilding the work item from scratch.

That is a more realistic definition of productivity in RA. Not "the AI wrote a paragraph." Instead:

- the comparison ran inside one reusable workspace

- each cell stayed linked to source

- the next reviewer could audit the result

- the same structure could be reused on the next product, market, or variation

This is also where the category starts to look less like "AI assistant" and more like operating environment.

What changes when the workspace is the product

Once the workspace becomes the unit of product design, a few things change.

First, the user no longer has to choose between speed and defensibility. The same step that saves time also preserves the citation chain.

Second, different modes of work stop competing with each other. Search, drafting, monitoring, and comparison are not separate product silos. They are views on the same evidence and the same work object.

Third, adoption becomes easier to justify internally. A team can evaluate one system on operational questions that actually matter:

- Does it keep the source chain intact?

- Can a reviewer inspect the evidence without leaving the workflow?

- Can the same work item continue from answer to draft to update?

- Can the system compare across jurisdictions without flattening the nuance?

That is a stronger adoption story than generic "AI productivity." It is specific, operational, and auditable.

The future is not AI answers. It is a cited operating model

Regulatory teams will keep using models. That part is settled.

The real question is what the model is embedded in. A chat interface alone is too shallow for the work. A document repository alone is too passive. A monitoring feed alone is too disconnected. A cited operating model brings the parts together.

That is what we mean by an agentic workspace for regulatory work. Not autonomous claims. Not marketing abstraction. A working environment where the system can retrieve, draft, compare, monitor, and preserve the evidence chain across the full flow of work.

The premium version of regulatory AI will not win because it sounds futuristic. It will win because it makes the source chain easier to keep intact than to lose.

Key takeaways

- Regulatory teams do not need a faster chat tool; they need a workspace that preserves the citation chain from question to final draft

- In regulated work, "agentic" should mean bounded workflow execution with traceability, not uncontrolled autonomy

- The answer, the draft, the monitoring alert, and the comparison view should all operate on the same evidence base

- Monitoring is only valuable when it feeds the live drafting and review workflow that the update affects

- The winning regulatory AI products will feel less like single prompts and more like operating environments for cited work

How RegAid helps

RegAid brings cited answers, source-grounded drafting, monitoring, and structured comparison into one regulatory workspace for pharma and medtech teams. Ask a question, inspect the source span, draft with the chain intact, track updates, and compare across jurisdictions without moving the work into disconnected tools. Start with your own live regulatory question at regaid.ch.